Acknowledgement: I found this “dailyhypervisor.com” blogpost very helpful in writing this piece up relative to my own environment:

http://dailyhypervisor.com/vcloud-automation-cetner-amazon-ec2-configuration/

As I had already set up both vCenter and vCloud Director as compute resources for vCAC, I thought I would start to look outside of the VMware family of technologies. vCAC comes with out-of-the-box support for Amazon EC2, so I thought I would give that a try before looking at foreign virtualization platforms such as Hyper-V and Xen. Making vCAC aware of those means a trip to the colocation facility to set up the hypervisors, whereas setting up Amazon EC2 should be doable from the comfort of my office chair…

It’s perhaps worth stopping for a moment and thinking about why Amazon EC2 access via vCAC might be worth doing. There are many reports of people in large corporates yanking out the credit cards and paying for compute capacity on Amazon. The story goes something like this:

Manager: How long will it take to spin up these resources to get the project started?

Developer: Dunno boss… Despite “The Infrastructure” guys having internal virtualization it still takes them ages to get anything done… Things seemed to be quicker when I had a bunch of PCs under my desk…

Manager: Have you forgotten already that apps developed on PCs don’t tend to scale? So we just sit about and wait for them?

Developer: Well, you could get the project off the ground using your credit card and Amazon?

Manager: How much will that cost?

Developer: Not much. Pay-as-you-go. Turn it off when we’re done…

Manager: Here’s my credit card…

The said manager/developer then exposes the company to unseen costs and unseen risks, as the developer keys in data that’s worth thousands, millions, billions of dollars to the company. The Manager then reads a news article called “US report warns on China IP theft”… For the record, I find such tales somewhat alarmist. If you’re a US company and your data is in the US, some might say the Manager/Developer are being “imaginative” in finding a workaround to their problem.

I’ve personally never used Amazon EC2 before, so I set about creating a user account there and just looking at their own packages and UI for creating new VMs. It is possible to set up a free user account and use “Micro Instances”. There are a number of pre-packaged templates that qualify for “free” usage so long as you don’t exceed the maximum usage amounts per month. I think that could be quite useful for folks in the vCommunity who want to play with vCAC and its integration with Amazon. The free account does require you to register a credit card with Amazon, but it doesn’t get billed so long as you run within certain constraints:

- 750 hours of Amazon EC2 Linux Micro Instance usage (613 MB of memory and 32-bit and 64-bit platform support) – enough hours to run continuously each month

- 750 hours of Amazon EC2 Microsoft Windows Server Micro Instance usage (613 MB of memory and 32-bit and 64-bit platform support) – enough hours to run continuously each month

- 750 hours of an Elastic Load Balancer plus 15 GB data processing

- 30 GB of Amazon Elastic Block Storage, plus 2 million I/Os and 1 GB of snapshot storage

There are a couple of ways of looking at these 750 hours. You could view it as one instance running for 750 hours, OR as 750 provisioning tests you could do via vCAC so long as each instance lived for just an hour. Remembering this is going to be important if you don’t want your credit card to be charged unexpectedly. Just sayin’…
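To make the 750-hour arithmetic concrete, here is a minimal sketch in Python. The function names are my own, and the rounding rule (each instance's runtime rounded up to a whole hour) is an assumption for illustration rather than a statement of Amazon's billing terms, so check the free tier page before relying on it.

```python
import math

FREE_TIER_HOURS = 750  # monthly allowance quoted in the list above


def instance_hours(instances: int, hours_each: float) -> int:
    """Total instance-hours used by `instances` machines running `hours_each` hours.

    Assumption: a partial hour counts as a full hour for each instance.
    """
    return instances * math.ceil(hours_each)


def within_free_tier(instances: int, hours_each: float,
                     allowance: int = FREE_TIER_HOURS) -> bool:
    """True if the planned usage stays inside the monthly allowance."""
    return instance_hours(instances, hours_each) <= allowance


if __name__ == "__main__":
    # One instance left running for a 31-day month (31 * 24 = 744 hours).
    print(within_free_tier(1, 31 * 24))
    # Two instances left running for the same month.
    print(within_free_tier(2, 31 * 24))
```

The point of the second call is that the allowance is shared: two instances left running all month use twice the hours of one.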

There are some other bits and bobs chucked in along the way. For more details consult http://aws.amazon.com/free/. Everything in Amazon is handled via a web browser, and I had problems using Chrome for some actions. For example, the built-in Java-based SSH client didn’t work in Chrome, but it worked fine with Firefox. This seems to be an increasing issue. I seem to spend my life bouncing around web browsers with the different web-based systems I manage, having to remember that A works with XBrowser, but B works with ZBrowser…

When you request a new “instance” (or virtual machine as we would normally call it) you will be confronted by a wizard. The images marked with a yellow star are free to use under the free account T&Cs. Of course, Amazon do have a “marketplace” where there are often pre-built appliances. Many of these are billable by the hour of usage, but often they cost as little as a couple of US cents to use.

Of course what I’d prefer to do is have more control over Amazon via vCAC, so I could wrap my manager/developer folks up in a neat audit bundle, and impose my own controls on how they grift the company’s expenses for their own purposes.

Step1: Adding Credentials from Amazon to vCAC…

1. As with other “endpoints”, the first step is to supply credentials to vCAC to allow it to authenticate to the endpoint (vCenter, vCloud Director, Amazon, Windows 2012 Hyper-V, Xen and so on). Unlike vCenter or vCloud Director, it’s not the raw username/password for the Amazon EC2 account that you use. What you need is your “Security Credentials”. These are accessible under your username in the AWS Console.

2. In the “Security Credentials” page, scroll down to “Access Credentials”. If you are a new user you will find that the table is likely to be blank. You can click the link “Create a new access key” to generate a new access key (now there’s a surprise!)

3. The Access Key ID value (in my case beginning AK…) needs to be copied into the Username portion when setting up Credentials in vCAC (found under vCAC Administrator, Credentials and New Credentials). The password field needs to be completed with the string from the “Secret Access Key” in Amazon, displayed by clicking the “Show” link (now there’s another surprise). Save your changes by clicking the Green Tick in vCAC.
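The mapping in the steps above is easy to get backwards, so here is a small sketch of it in Python. It assumes the two values have been placed in the environment variables `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` (the names the AWS tools conventionally use); the `VcacCredential` type and the function name are hypothetical and have nothing to do with vCAC's own API. The sample keys in the usage are the placeholder values from Amazon's documentation, not real credentials.

```python
import os
from typing import Mapping, NamedTuple


class VcacCredential(NamedTuple):
    username: str  # vCAC "Username" field <- Amazon Access Key ID
    password: str  # vCAC "Password" field <- Amazon Secret Access Key


def credential_from_env(env: Mapping[str, str] = os.environ) -> VcacCredential:
    """Build the username/password pair to type into vCAC from the AWS key pair."""
    key_id = env.get("AWS_ACCESS_KEY_ID", "").strip()
    secret = env.get("AWS_SECRET_ACCESS_KEY", "").strip()
    if not key_id or not secret:
        raise ValueError("AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY must both be set")
    # Whitespace inside a key usually means a bad copy and paste from the console.
    if any(ch.isspace() for ch in key_id + secret):
        raise ValueError("keys must not contain whitespace")
    return VcacCredential(username=key_id, password=secret)


if __name__ == "__main__":
    sample = {
        "AWS_ACCESS_KEY_ID": "AKIAIOSFODNN7EXAMPLE",
        "AWS_SECRET_ACCESS_KEY": "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY",
    }
    print(credential_from_env(sample).username)
```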

Step2: Creating an Endpoint in vCAC…

1. The next step is to add Amazon EC2 as an endpoint in vCAC. To do this open the vCAC Administrator, select Endpoints, and then click +New Endpoint, selecting Amazon EC2 from the pull-down list

2. Normally, at this stage in adding an endpoint you’d expect to specify a URL/FQDN or path to the service. That’s actually done a little bit later in the Reservations and Provisioning Group setup. Right now, vCAC only needs to know the Type and the credentials needed to access it:

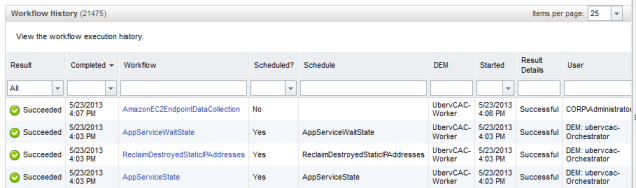

If you have been successfully authenticated, vCAC inspects Amazon EC2 to find out what resources are available. You should then see an event called “AmazonEC2EndpointDataCollection” in the “Workflow History” under vCAC Administrator, marked as “Successful”.

Step3: Creating an Enterprise Group…

As with our other endpoint compute resources, we can create an Enterprise Group, or use an existing one, and associate it with our Amazon EC2 connection.

1. You create an Enterprise Group under the vCAC Administrator node, Enterprise Groups and +New Enterprise Group. vCAC should enumerate all the Amazon “regions”…

A quick look at my account in Amazon indicated my default location was US-West-2, which did make me wonder if anyone who actually uses Amazon cares too much. I guess if you were using Amazon to run production workloads you might want to distribute them around the country to reduce latency and vulnerability to outages.

2. If you select ALL the regions in the vCAC pull-down list, it is still possible, when you set up a reservation and assign it to a Provisioning Group, to limit which regions the vCAC users can access… So I could limit the vCAC Provisioning Group for “CorpHQ – Production” to the US-West-2 availability zones 2a, 2b, and 2c…
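The relationship between a region and its availability zones can be sketched in a few lines of Python. This assumes the common naming pattern where a zone name is the region name plus one trailing letter (us-west-2 plus a, b, c); zones that do not follow that pattern are outside what this sketch handles, and the function names are mine.

```python
from typing import Iterable, List


def region_of(zone: str) -> str:
    """Return the region for a zone name, e.g. 'us-west-2a' -> 'us-west-2'.

    Assumption: a zone is named as its region plus a single trailing letter.
    """
    zone = zone.strip().lower()
    if len(zone) < 2 or not zone[-1].isalpha() or not zone[-2].isdigit():
        raise ValueError(f"not a recognised zone name: {zone!r}")
    return zone[:-1]


def zones_in_region(zones: Iterable[str], region: str) -> List[str]:
    """Keep only the zones that belong to `region`, preserving their order."""
    return [z for z in zones if region_of(z) == region.lower()]


if __name__ == "__main__":
    everything = ["us-east-1a", "us-west-2a", "us-west-2b", "us-west-1a", "us-west-2c"]
    # The zones a "CorpHQ – Production" style group would be limited to.
    print(zones_in_region(everything, "us-west-2"))
```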

Step4: Create a “Cloud” based Reservation

In my previous posts, both the vCenter and vCloud Director reservations were created using the “Virtual” type of reservation. In this case we will use “Cloud” as the type for the first time.

1. In the Enterprise Admin node, select Reservations

2. Select +New Reservation and Cloud

3. From the “Compute Resource” pull-down list – select the region you wish to allocate:

4. From there we can give the reservation a name, assign the provisioning group, and set limits on the number of VMs they can create, as well as the priority value.

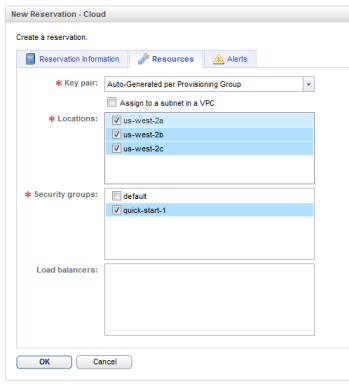

5. Under the “Resources” tab you should see options concerning key pairs, sub-locations, and security groups. These are common options in the Amazon EC2 environment, and if you have signed up for an account with Amazon EC2 and created a new instance, you will have seen some of these properties before. Key Pairs are used to automate access to an Amazon instance for protocols like SSH. A key pair allows the creator of the instance to use a private key .pem file to open a session to the instance’s public Amazon FQDN, and get root-level access without needing to know the root password. You can provide keys that were generated earlier, ask Amazon to generate keys that cover the entire Provisioning Group, or create a key for each instance created.

Within each Amazon location (for example US-West-2) there are sub-locations, which Amazon also refers to as “Availability Zones”. Security Groups control the firewall rules that are applied to an instance created in Amazon EC2. By default there are Security Groups available in Amazon EC2 called “Default” and “Quick-Start-1”. Default more or less allows all communication into the instance, whereas Quick-Start-1 is limited to SSH on port 22; in my case I added support for ports 80 and 443. Creating a new Security Group in Amazon EC2 is very easy. You just click “Create Security Group”, and the pull-down list offers a series of readily available TCP ports to open.
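If it helps to think of a Security Group as data, the following Python sketch models one as a list of inbound rules and checks whether a given connection would be let in. It is a toy model only and does not talk to Amazon. The `Rule` class is hypothetical, and opening each port to any source address (0.0.0.0/0) is my assumption, not something taken from the group's real settings.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Iterable


@dataclass(frozen=True)
class Rule:
    from_port: int
    to_port: int
    cidr: str = "0.0.0.0/0"  # assumption: open to any IPv4 source


def allows(rules: Iterable[Rule], port: int, source_ip: str) -> bool:
    """True if any inbound rule covers both the TCP port and the source address."""
    src = ip_address(source_ip)
    return any(
        rule.from_port <= port <= rule.to_port and src in ip_network(rule.cidr)
        for rule in rules
    )


# SSH as described above, plus the two web ports I added.
QUICK_START_1 = [Rule(22, 22), Rule(80, 80), Rule(443, 443)]

if __name__ == "__main__":
    print(allows(QUICK_START_1, 22, "203.0.113.5"))    # SSH
    print(allows(QUICK_START_1, 3389, "203.0.113.5"))  # RDP
```

Anything not matched by a rule is refused, which is why a Windows instance placed in this group would not accept RDP until a rule for 3389 is added.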

Step5: Create a Blueprint for Amazon EC2 Instance…

The next step is to associate a vCAC Blueprint with an Amazon EC2 instance. We need to be a little bit careful to select an instance that is both available in our selected region and qualifies for free use.

1. Under Provisioning Group Manager, select Blueprints, click New Blueprint and select “Cloud”

2. After you have given the Blueprint a name, select the Build Information tab. For Blueprint Type select Server and for Provisioning Workflow select CloudProvisioningWorkflow. You can then use the … button to browse for an Amazon Machine Image (AMI):

Absolutely everything on Amazon gets listed (5,000k), so I found myself using the filter expression builder to create a filter that listed just the images that had aws-marketplace as the source and contained “wiki” as a string, in my region of US-West-2. By default the filter uses “begins with”, so I needed to add an expression that filters “source” for entries containing the string “wiki”.
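The difference between “begins with” and “contains” is what tripped me up, so here is the same filter logic as a short Python sketch. The image records, their field names and the `ami-0000000x` IDs are invented sample data for illustration, not real Amazon listings, and the function names are my own.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical sample of an image listing; every value here is made up.
IMAGES: List[Dict[str, str]] = [
    {"id": "ami-00000001", "source": "aws-marketplace/mediawiki-stack", "region": "us-west-2"},
    {"id": "ami-00000002", "source": "aws-marketplace/wordpress-stack", "region": "us-west-2"},
    {"id": "ami-00000003", "source": "aws-marketplace/mediawiki-stack", "region": "us-east-1"},
    {"id": "ami-00000004", "source": "amazon/amzn-ami-minimal", "region": "us-west-2"},
]


def field_matches(value: str, text: str, mode: str = "begins") -> bool:
    """Case-insensitive 'begins' (the default) or 'contains' comparison."""
    value, text = value.lower(), text.lower()
    if mode == "begins":
        return value.startswith(text)
    if mode == "contains":
        return text in value
    raise ValueError(f"unknown mode: {mode}")


def filter_images(images: Iterable[Dict[str, str]], region: str,
                  criteria: Iterable[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Keep images in `region` whose source satisfies every (text, mode) pair."""
    criteria = list(criteria)
    return [
        img for img in images
        if img["region"] == region
        and all(field_matches(img["source"], text, mode) for text, mode in criteria)
    ]


if __name__ == "__main__":
    hits = filter_images(IMAGES, "us-west-2",
                         [("aws-marketplace", "begins"), ("wiki", "contains")])
    print([img["id"] for img in hits])
```

With only the default “begins” comparison, a search for “wiki” finds nothing in this sample, because every source string starts with the publisher rather than the product name.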

What’s interesting about the Amazon Marketplace is that it’s much easier to browse in a webpage, but none of the submissions in the marketplace appear to show their AMI ID. If that was easy to find, you’d just use a webpage to find what you were looking for and filter on that parameter. In short, I found it VERY difficult to find the right AMI in the list, mainly because Amazon makes it difficult to find them. It’s almost as easy to deploy from Amazon, find out the AMI value, and keep that for vCAC. The way to do that is to find what you’re looking for using the “Classic Wizard”

Select it and, on the next page, make a note of the AMI ID value

Step6: Deploy!

Once the blueprints have been defined, members of the Provisioning Groups can see the Amazon AMI images made available. I’ve chosen different icons and used descriptors and names that allow me to easily tell where the VMs/instances are sourced. There’s nothing obligating me to do that. My consumers need have no idea which cloud type they are running their VMs in: public, private, vCloud, Amazon…

Once the instances have been generated, a user can start to connect to them. That requires some work if you are connecting from Windows with PuTTY to a Linux instance.

For Linux Instances:

1. You will need to download the certificate for that VM, to establish an SSH Session

2. Next PuTTYgen needs to be used to convert the key into a format PuTTY can use. Use “Load” to load the <machinename>.pem file:

3. Then click the “Save Private Key” button to save it as a .PPK file. You can add a passphrase during this export/conversion so that only someone who knows the passphrase to the private key is allowed access…

4. Next crank up PuTTY and navigate to +Connection, +SSH and +Auth, and browse for the .PPK file

Next we need to provide the hostname to connect to via PuTTY. Clearly, “corphq012” is not the name of the instance as understood in Amazon EC2. To find out the public name from vCAC, the consumer needs to edit the machine and look in the “Network” page to locate the name.

5. You should still be challenged for a username, but no password will be required. The username should default to ec2-user

Note: If you are working from Linux/Mac you should be able to use a command-line version of SSH, specifying the .PEM file natively, and connect that way.
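For the Linux/Mac route, here is a minimal Python sketch that tightens the permissions on the key file and builds the `ssh -i` command line. The file name and the hostname in the usage are made-up placeholders; substitute the .pem you downloaded and the public name shown on the machine's “Network” page. The `ec2-user` default follows step 5 above, and other images may expect a different username.

```python
import os
import stat
from typing import List


def restrict_key_permissions(pem_path: str) -> None:
    """Make the key readable and writable by its owner only (mode 0600).

    OpenSSH refuses to use a private key file that other users can read.
    """
    os.chmod(pem_path, stat.S_IRUSR | stat.S_IWUSR)


def ssh_command(pem_path: str, host: str, user: str = "ec2-user") -> List[str]:
    """Build the argument list for: ssh -i <key> <user>@<host>"""
    return ["ssh", "-i", pem_path, f"{user}@{host}"]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(" ".join(ssh_command("corphq012.pem", "ec2-host.example.com")))
```

Passing the list to `subprocess.run` would start the session; building it as a list avoids any quoting problems with paths that contain spaces.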

Connecting to Windows:

By default RDP is enabled in any Amazon EC2 Windows instance, so all you need is the FQDN and the randomly generated password for the administrator account. It’s worth double-checking that your security group(s) do actually allow inbound 3389 access for RDP sessions. As with the Linux instance, you can retrieve these values by editing the VM.

I actually struggled with the vCAC link to “Connect using RDP”, as RDP kept on wanting to use my “CORP” domain credentials. I found it much easier to run MSTSC manually, or use Remote Desktop Connection Manager instead. I also found that the RDP client for the Mac worked out of the box.
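A quick way to double-check the inbound 3389 (or 22) access mentioned above, before blaming the RDP or SSH client, is to try a plain TCP connection to the port. This is a minimal sketch using only Python's standard library; the hostname in the usage is a placeholder, and a failed connection only tells you the port is unreachable from where you are, not which firewall is responsible.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


if __name__ == "__main__":
    host = "ec2-host.example.com"  # placeholder: use the instance's public FQDN
    for port in (22, 3389):
        print(port, port_open(host, port))
```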