Disclaimer:

In my lab I only have 4 NICs per physical box. Whilst this is quite good for a homelab, it’s perhaps not “production” ready. In my experience most virtualization servers that are based on 2U or 4U equipment generally have more NICs than this. I would say if you were going to kick the tyres on Windows Hyper-V in your homelab you would ideally have more NICs than you would consider with vSphere. Of course, if your virtualization host has only one NIC you will have your work cut out experimenting with NIC Teaming on either platform. 😉

Update:

I recently had the need to add additional hosts to my SCVMM environment. This was a new cluster in the same network site. So as an addendum to this post – I covered the many, many steps required to complete those tasks.

Occasionally, it feels like this series of blogposts is me having all these horrible experiences of Microsoft virtualization – so you don’t have to go through the pain. But I am thinking about the challenges of anyone in their homelab trying to get their heads round the product. Watch out for the number of NICs you have, and don’t expect the same level of ease of use you get with VMware vSphere…

Addendum: Adding new hosts to a pre-configured environment

In my early use of Windows Hyper-V R2 it quickly became apparent that few folks would manage the Microsoft hypervisor with just Hyper-V Manager and use standard virtual switches. What was more likely would be for them to use the “Logical Switch” available in SCVMM. The “Logical Switch” is analogous to the VMware vSphere Distributed Switch only in that it allows for centralized management. However, as I’ve worked with the logical switch over time, I’ve begun to feel they are subtly different. That’s important – not good, bad, just different.

For me the interesting distinction is that whereas vSphere Standard and Distributed Switches are two totally independent virtual switch constructs, the Windows Hyper-V logical switch merely adds additional management options to Microsoft virtual switches. If it helps, call the logical switch a virtual switch with bells on. The standard Windows Hyper-V virtual switches support functionality such as a “private” or “internal” virtual switch that restricts communication to just the VMs on the Windows Server or between the VMs and the Windows Server. These types of virtual switch are only available from Hyper-V Manager. So if you want to use a combination of all three (internal, private and external) you will need to switch between Hyper-V Manager and SCVMM to do your SysAdmin work. It’s worth stating that these Internal/Private switches are of little use to production environments, and probably only of interest to those working in a test/dev environment.

Before I begin with the setup routine, it’s perhaps worth retelling briefly the shaggy-dog story that is my Windows Hyper-V network. My initial intention was to mirror the configuration I normally use with VMware ESXi. I have four NICs, and I would normally create two virtual switches – one to carry my infrastructure traffic (management, IP storage, HA Heartbeat, vMotion), and another virtual switch dedicated to the virtual machines. Sadly, I was unable to carry out this configuration with Windows Hyper-V because the cluster “Validation Wizard” would not pass my network configuration. Instead I had to dedicate a single NIC to each type of network activity. At this point I was beginning to wonder if the real agenda behind Microsoft’s avowed endorsement of “converged networking” is a reflection of a much more brutal reality. In order to meet Microsoft’s pre-requisites you need a significant number of NICs – and doing that with conventional gigabit dual-port or quad-port cards is going to be an expensive operation. You know the port on the physical switch isn’t free (even if it doesn’t come out of your cost center) – I’ve always said take the price of the physical switch, and divide by the number of ports – that’s how much each of those ports costs you. I think this is an important design consideration for those who find converged networking and blades are beyond their budget, and who are building out a virtualization layer with conventional 2U and 4U servers.
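The price-per-port rule of thumb is simple arithmetic, and it is worth running before you commit to one NIC per traffic type. Here is a minimal sketch in Python; the switch price, port count and NIC count are made-up figures for illustration, not quotes for any real hardware.

```python
def cost_per_port(switch_price: float, port_count: int) -> float:
    """Price of the physical switch divided by its number of ports."""
    if port_count <= 0:
        raise ValueError("port_count must be positive")
    return switch_price / port_count


def host_port_cost(switch_price: float, port_count: int, nics_per_host: int) -> float:
    """Switch-port cost consumed by one host that uplinks every NIC."""
    return cost_per_port(switch_price, port_count) * nics_per_host


# Hypothetical figures: a 48-port switch priced at 2400 (any currency),
# and a host with 4 NICs, as in my lab.
per_port = cost_per_port(2400.0, 48)
per_host = host_port_cost(2400.0, 48, 4)
print(f"per port: {per_port:.2f}, per 4-NIC host: {per_host:.2f}")
```

Double the NIC count per host and the per-host figure doubles with it, which is the point of the rule.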

You can reach the wizard for creating a logical switch under “Fabric” in the left-hand corner of SCVMM. If you have set up SCVMM to manage VMware vSphere you will see both a pre-defined logical network (in my case called “corp”) as well as any VMware Distributed vSwitches. As part of my research I’ve been investigating options for V2H and H2V (vSphere to Hyper-V and Hyper-V to VMware) conversions (more on that in a future blogpost).

There are a number of stages, in separate wizards, that the SysAdmin has to go through to create a logical network. The creation of these networking constructs generates dependencies as you would expect, and they do have to be done in a particular order. There’s nothing hard and fast here, but you can’t do step 3 or 7 until you have completed steps 1 or 6 – and you can’t do step 6 until you have done step 5. Step 9 can be carried out between each step depending on the state of your constitution. 🙂

- Create the Logical Network – where you can define “Network Sites”

- Create the Network Sites – these are where your VLAN definitions are held, and you can have many VLANs per site.

- Create an IP Address Pool – which is associated with a site – required if you want VMM to assign a static IP address when deploying templates

- Associate Physical NIC to Logical Network(s)

- Create a VM Network

- Create a Port Profile

- Create a Logical Switch

- Associate Physical NIC to Logical Switch

- Create a VM!

- Have a little lie down. Take a deep breath. Keep taking the medication. At times it will feel like you’re making a lot of different components that you then have to glue together to make networking function.
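The ordering constraints in the list above are a small dependency graph, so a valid running order can be worked out mechanically. This is a minimal sketch using Python’s standard `graphlib` module (Python 3.9+). The step names are my own shorthand, and the edges are my reading of this walkthrough rather than anything SCVMM publishes.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Each key depends on the steps in its set. Names are shorthand for the
# list above; the edges are my reading of the post, not official SCVMM rules.
DEPENDS_ON = {
    "logical_network": set(),
    "ip_pool": {"logical_network"},                 # a pool is tied to a site
    "nic_to_logical_network": {"logical_network"},  # step 3 needs step 1
    "vm_network": {"logical_network"},              # picks a Site-VLAN
    "port_profile": {"logical_network"},            # profile selects sites
    "logical_switch": {"port_profile"},             # step 6 needs step 5
    "nic_to_logical_switch": {"logical_switch"},    # step 7 needs step 6
    "vm": {"vm_network", "nic_to_logical_switch"},
}


def build_order(depends_on):
    """Return one valid order in which the steps can be carried out."""
    return list(TopologicalSorter(depends_on).static_order())


order = build_order(DEPENDS_ON)
print(" -> ".join(order))
```

More than one order satisfies the constraints, which matches the “nothing hard and fast” feel of the wizards. The sorter returns just one of them.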

1. Creating the Logical Network

Note: The main take away here is the Logical Network is where your VLAN definitions are held.

The “Create Logical Network” wizard allows you to define the type of communication you want to use. It was my intention to put my VMs on to VLAN12, which is used by the CorpHQ Organization, using VLAN Tagging. This is perhaps the most common of virtualization network configurations at this point in time, where VLAN Tagging is used to put different VMs on different networks even though they all share the same physical network. Packets are tagged with a VLAN identifier and sent down to the physical switch, which then forwards them to the appropriate broadcast domain.
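For anyone who has never looked inside a tagged frame, the “VLAN identifier” is a 12-bit field inside a 4-byte IEEE 802.1Q tag. The sketch below builds that tag for VLAN 12 in Python. It only illustrates the tag layout; it is not how Hyper-V or SCVMM do it internally, and the function names are my own.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q-tagged frame


def vlan_tag(vlan_id: int, priority: int = 0, dei: int = 0) -> bytes:
    """Build the 4-byte 802.1Q tag: 16-bit TPID followed by 16-bit TCI."""
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("VLAN ID is a 12-bit field")
    if not 0 <= priority <= 7:
        raise ValueError("priority is a 3-bit field")
    if dei not in (0, 1):
        raise ValueError("DEI is a single bit")
    # TCI layout: 3 bits priority, 1 bit DEI, 12 bits VLAN ID.
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)


def vlan_id_of(tag: bytes) -> int:
    """Read the VLAN ID back out of a 4-byte 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci & 0x0FFF


tag = vlan_tag(12)
print(tag.hex())  # TPID then TCI, in network byte order
```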

Next you’re required to select which hosts will have access to this logical network, and set the VLAN ID and IP subnet associated with this network. The Add button does allow you to add more “sites”. The relationship is one logical network with many sites associated with it. I guess what Microsoft is expecting is that the wizard is going to be used to manage many Windows Hyper-V hosts in many sites, with each site having a collection of VLANs associated with it.

Personally, I’m not a fan of this sort of “serialization” naming convention of “CorpHQ-ProductionLogicalNetwork_0”. I quickly dropped it to reflect the resource that was actually being consumed. So in my case I defined one site (CorpHQ-Network) with many VLANs contained in the site (VLAN12/13/14/15) with their associated subnets (172.168.5.0/6.0/7.0/8.0)…
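If it helps to see the “one logical network, many sites, many VLANs per site” relationship written down, here is a minimal sketch as Python dataclasses. The class names are my own, not SCVMM object names. The /24 masks, the host name and the logical network name are assumptions, as this post only gives the network addresses and the site name.

```python
from dataclasses import dataclass, field
from ipaddress import IPv4Network


@dataclass(frozen=True)
class VlanSubnet:
    vlan_id: int
    subnet: IPv4Network


@dataclass
class NetworkSite:
    name: str
    hosts: list[str] = field(default_factory=list)   # hosts with access
    vlans: list[VlanSubnet] = field(default_factory=list)


@dataclass
class LogicalNetwork:
    name: str
    sites: list[NetworkSite] = field(default_factory=list)


# One site holding VLAN12-15. The /24 masks are assumed.
site = NetworkSite(
    name="CorpHQ-Network",
    hosts=["hyperv01"],  # hypothetical host name
    vlans=[
        VlanSubnet(vid, IPv4Network(f"172.168.{octet}.0/24"))
        for vid, octet in zip((12, 13, 14, 15), (5, 6, 7, 8))
    ],
)
corp = LogicalNetwork(name="CorpHQ", sites=[site])  # hypothetical name

for v in corp.sites[0].vlans:
    print(f"VLAN{v.vlan_id}: {v.subnet}")
```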

2. Creating an IP Address Pool

Note: The main take away is IP address pools are optional, and only used when you provision new VMs from a template.

My next step was defining pools of IP addresses and associating them with the Site-VLANs I’d previously defined. This is an optional configuration, and I guess the equivalent features in the VMware world are IP Pools on a Distributed vSwitch in vSphere, or IP Pools as defined in vCloud Director. You can define an IP Pool per Site-VLAN by clicking the “Create IP Pool” icon on the ribbon bar, or by right-clicking a logical network in the list and selecting create IP pool.

Once appropriately labelled, you can then select which Site-VLAN is to be associated with the IP Pool.

And from there you can specify a range of IP addresses, DNS and WINS (people still use WINS?!?!)

Once you have whizzed through the IP Pools wizard a couple of times the “logical networks” pane updates to show the associated IP Pools.
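Conceptually an IP Pool is just a range inside one of the Site-VLAN subnets, from which static addresses are handed out when a template is deployed. Here is a minimal sketch in Python. The class is my own illustration rather than SCVMM’s allocation logic, and the range and the /24 mask are hypothetical.

```python
from ipaddress import IPv4Address, IPv4Network


class IPPool:
    """A range of static addresses inside one subnet, handed out in order."""

    def __init__(self, subnet: str, first: str, last: str):
        self.subnet = IPv4Network(subnet)
        self.first = IPv4Address(first)
        self.last = IPv4Address(last)
        if self.first not in self.subnet or self.last not in self.subnet:
            raise ValueError("pool range must sit inside the subnet")
        if self.first > self.last:
            raise ValueError("first address must not be after last")
        self._allocated = set()

    def allocate(self) -> IPv4Address:
        """Return the lowest free address in the range."""
        for n in range(int(self.first), int(self.last) + 1):
            addr = IPv4Address(n)
            if addr not in self._allocated:
                self._allocated.add(addr)
                return addr
        raise RuntimeError("pool exhausted")

    def release(self, addr: IPv4Address) -> None:
        """Hand an address back to the pool."""
        self._allocated.discard(addr)


# Hypothetical three-address range on the VLAN12 subnet (mask assumed /24).
pool = IPPool("172.168.5.0/24", "172.168.5.100", "172.168.5.102")
first_vm = pool.allocate()
print(first_vm)
```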

3. Associate Physical NIC with Logical Network

Note: The main take away is logical networks must be associated with a network adapter in order for their settings to be presented to the VM.

The next stage is associating all this logical stuff with the physical stuff – a process that is much the same as when you define a VMware Standard vSwitch or Distributed vSwitch. I initially found this confusing, as I couldn’t actually see the properties. That’s because when you right-click a Windows Hyper-V host and check its hardware properties, everything is expanded by default, and the hardware page shows all hardware, not just networking, which was my primary concern.

A judicious use of the [-] helped me regain some focus on the task in hand – associating the physical NIC to the logical network. I found my ethernet3-VM adapter was already associated with the default logical network; so I had to assign it to the new networks that I’d defined.

4. Create VM Networks

Note: The main take away here is that the “VM Network” object is what the VM interfaces with. When you configure a VM for network, you’re plugging into a “VM Network”.

The first thing to mention about VM Networks is that they are NOT to be found in the “Fabric” view where most networking components are to be found under the networking node.

Instead, the definition of “VM Networks” is to be found under the “VM and Services” view. It’s to a “VM Network” that a virtual machine is actually connected – not to a logical network, IP pool or indeed the Site-VLAN. This leads to an interesting level of abstraction – because you can call these VM Networks anything you like, and folks using them are isolated from the underlying configuration. To a degree this is no different from a portgroup on a VMware vSwitch – the portgroup could be called “Production” for all we care, with the VLAN tagging backing it being 12. If you have vCloud Director deployed in your vSphere environment you get even more abstraction.

Existing “VM Networks” can be viewed in the (drum roll) “VM Networks” node in SCVMM, and added with (another drum roll) the “Create VM Networks” button.

In the second part of the wizard you can select the Site-VLAN configuration created earlier.

This screen grab shows Windows Hyper-V 2012 where the VLAN assignments were per-VM. Incidentally, this couldn’t be done at the time of creation, but only after the VM had been created…

5. Create Port Profiles

Note: The main takeaway is you must create at least one port profile before you can create a logical switch. Once defined port profiles can be changed but not radically so. For example if you create a NIC Teamed port profile, it can be converted into an uplink only profile.

Port Profiles hold network capabilities and settings – and they can be applied to either physical or virtual NICs. The intention is to allow for the easy reconfiguration of those settings from one location. You’ll find the port profiles located in the “Fabric” section. By default Microsoft has populated SCVMM with many “virtual” port profiles for use with Windows Hyper-V virtual machines, but there are no “physical” port profiles defined which control how the physical uplinks are utilized. It’s these port profiles that stop you from having to define what type of “NIC Teaming” algorithm is used for the physical network. However, on each Windows Hyper-V server you must still assign which NICs will be used as part of the team. That’s carried out at Step 7 after the logical switch has been defined.

When you define a port profile you will need to assign it a name and select the option for an “Uplink Port Profile”. You can then select the load-balancing algorithm (Transport Port, IP, MAC, Hyper-V Port, Dynamic) and the teaming mode (Static Teaming, Switch Independent, LACP).

In the second part of the wizard you can select which Sites (which includes the VLAN definitions) you wish this port profile to be applied to, and you also have the option to enable Microsoft Hyper-V Network Virtualization.
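To keep the settings straight in my own head, an uplink port profile boils down to a name, one load-balancing algorithm, one teaming mode, a list of sites and a network virtualization toggle. Here is a minimal sketch in Python. The option labels are taken from the wizard as described above, but the class and field names are my own, and the particular combination chosen is arbitrary rather than a recommendation.

```python
from dataclasses import dataclass, field
from enum import Enum


class LoadBalancing(Enum):
    TRANSPORT_PORT = "Transport Port"
    IP = "IP"
    MAC = "MAC"
    HYPER_V_PORT = "Hyper-V Port"
    DYNAMIC = "Dynamic"


class TeamingMode(Enum):
    STATIC_TEAMING = "Static Teaming"
    SWITCH_INDEPENDENT = "Switch Independent"
    LACP = "LACP"


@dataclass
class UplinkPortProfile:
    name: str
    load_balancing: LoadBalancing
    teaming_mode: TeamingMode
    sites: list[str] = field(default_factory=list)
    network_virtualization: bool = False


# Arbitrary example values; pick whatever suits your physical switches.
profile = UplinkPortProfile(
    name="pNIC Teaming",
    load_balancing=LoadBalancing.HYPER_V_PORT,
    teaming_mode=TeamingMode.SWITCH_INDEPENDENT,
    sites=["CorpHQ-Network"],
)
print(profile.name, profile.load_balancing.value, profile.teaming_mode.value)
```

Using enums means a typo such as `LoadBalancing("Dynamc")` fails immediately with a `ValueError` instead of producing a profile with a nonsense setting.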

You can remove port profiles, but watch out for dependencies. If you try to rip out a port profile the action is likely to fail because other objects reference it. This is the type of error message you would get if you just tried to delete a port profile that was in use elsewhere. You can rename port profiles if you want to, but you cannot rename logical switches once they have been assigned to a Windows Hyper-V host.

6. Create Logical Switch

Note: The main take away here is you must create a switch in order to create a VM, whether that be standard switches on the Windows Hyper-V hosts (external, internal, private) or a logical switch in SCVMM. The logical switch also holds settings such as support for SR-IOV and what port profiles (if any) will be used. Whatever you do, don’t start the process of creating a Logical Switch by creating a logical switch! I know that sounds screwy, because it is. There are lots and lots of other configuration tasks that must be done first before you get anywhere near your end goal. The Logical Switch Wizard makes this plain to see:

Again, logical switches are defined in the “Fabric” part of SCVMM. It might be worthwhile checking your naming convention for the logical network – in an attempt to be consistent.

Next we need to decide what “extensions” we require on the logical switch:

In the uplink part of the logical switch wizard, we need to decide if this switch will support NIC Teaming, and what port profile will be associated with it. In my case I’ve run out of NICs to do this for real. But I’m going to select it anyway, so I can take screen grabs. So “Uplink Mode” is switched to “Team”, and the “Add” button is used to add in my “pNIC Teaming” port profile that was created in Step 5.

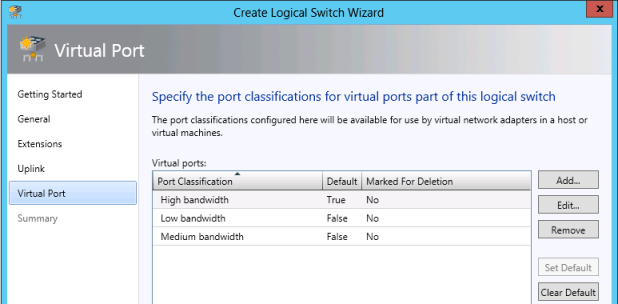

Next we can choose which virtual port profiles can be accessed via the logical switch. These virtual port profiles are utilized by the VMs, not the physical host. In my case I decided to include the port profiles that control the amount of bandwidth allocated to the VM. I’ve yet to figure out how these actually work – and it’s something I want to look at in detail at a later date…

Initially, when I clicked Finish on the summary page, an error occurred because I had not successfully configured these virtual ports.

This happened because these bandwidth-based port profiles need both a virtual AND a Hyper-V port profile to function. Although the logical switch was created, the virtual port configuration had been stripped out. I needed to go back in and select both. Sadly, this dialog box does not support multi-select, so I had to do each one individually. I was a bit surprised to find that the requirements of virtual ports are not checked in the wizard – and that I was able to add in a virtual port without its required Windows Hyper-V port profile.

7. Assign Physical NIC to Logical Switch

Note: The main take away is that the logical switch wizard is NOT the place where the Windows Hyper-V host is assigned to the logical switch. Each Windows Hyper-V host needs to be allocated to the logical switch once it has been created.

The next stage is to change the properties of each Windows Hyper-V host and add the logical switch into its configuration, and additionally specify which network cards the logical switch will use. I must admit I felt a certain “déjà vu”. After all, hadn’t I assigned a physical NIC to the logical network in Step 3?

After right-clicking a Windows Hyper-V host and selecting the properties option, you can then select the “virtual switch” option. By default the Windows Hyper-V host will not have any virtual switches assigned. Using the +New Virtual Switch button will allow you to add in either a Standard Switch or our newly created logical one.

As I only have one logical switch this is added in by default, and if I want NIC Teaming I need to select the physical NICs that will be used as part of the team. I noticed that this part of the dialog box reveals the physical card name, not the logical name assigned to it (eth0-management, eth1-heartbeat and so on). So you will need to be careful here that you don’t enrol (or enroll if you’re from the US/Canada!) the wrong physical NICs into the team.

When you click OK you will receive this warning:

You must assign logical switches in this way, or else when you come to create a VM you will receive a warning indicating that the Windows Hyper-V server doesn’t have access to any virtual switches.

8. Create VM!

Note: One thing you’ll notice is you cannot use the IP Pool settings when defining a VM from scratch, the pool is only used when you’re creating a VM from an existing template.

Conclusions:

The other aspect I’m conscious of is that with so many objects each one requires a label. We need a naming convention that can accommodate the creation of:

- Logical Networks

- Site-VLAN names within logical networks

- IP Pools

- VM Networks

- Port Profiles (for both virtual and uplink if you’re using both)

- Logical Switches

Most of these objects respond well to being renamed if you haven’t planned out a convention, except for the logical switch itself. Once it is assigned to a host, you’re pretty much stuck with it. This separation of different objects holding different settings creates a rich set of dependencies. So if you’re going to use Windows Hyper-V you had better understand these interlocking components REALLY WELL. You’d better get them right, because removing them is not easy.

One dialog box that I became intimately familiar with was the “View Dependent Resources”. That’s because I wanted to remove my initial uplink port profile, but couldn’t because it was used elsewhere. Most of the networking constructs created in this process have dependencies generated.

Now I’m not saying VMware doesn’t have dependencies; all enterprise software does. Anyone who has tried to remove an external network or organization network in vCloud Director or tried to remove an ESX host from a Distributed vSwitch will know what I’m talking about! I just feel that with the range and scope of these different networking constructs there’s a level of complexity that might be undesirable in a virtualization environment. On the plus side I think it is quite good that SCVMM has this “dependencies” view, although I think it’s a tacit admission of the complexity. It’s fair to say that VMware vCenter and vCloud Director don’t have a view like this, and it could be useful for diagnosing problems or working out the relationships that exist. In my own mind I’m trying to work out the relationship between the various network constructs in SCVMM. As I see it they look like this:

VM >>> VM Network >>> Logical Network (Site-VLAN/IP Pool) >>> Port Profile >>> Logical Switch >>> Hyper-V Server >>> Physical NIC/Team

If I were doing similar mapping in VMware vSphere the relationships would look like this

VM >>> Portgroup (with VLAN Tag) >>> vSwitch >>> Physical NIC/Team

In vCloud Director the mapping would be more abstracted:

VM >>> vApp Network (Optional) >>> Organisation Network >>> External Network

Backing the vApp and Organization Network would be a network resource pool type (VLAN, VXLAN or vCDNI) and a bundle of IP ranges associated with each network. The organization network portgroup would be automatically generated and assigned from a pool, and the external network portgroup (one per Org) would be manually created.
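One crude way to compare the three mappings is simply to count the hops between the VM and the far end of each chain. This is a minimal sketch in Python that does nothing cleverer than that. The chains are copied from my own diagrams, and hop count is only a rough proxy for complexity.

```python
# The three relationship chains as drawn in this post, one list per platform.
CHAINS = {
    "SCVMM / Hyper-V": [
        "VM", "VM Network", "Logical Network (Site-VLAN/IP Pool)",
        "Port Profile", "Logical Switch", "Hyper-V Server", "Physical NIC/Team",
    ],
    "vSphere": [
        "VM", "Portgroup (with VLAN Tag)", "vSwitch", "Physical NIC/Team",
    ],
    "vCloud Director": [
        "VM", "vApp Network (Optional)", "Organisation Network", "External Network",
    ],
}


def hops(chain):
    """Number of relationships between the first and last object."""
    return len(chain) - 1


def render(chain):
    """Draw a chain in the same style used in the post."""
    return " >>> ".join(chain)


for platform, chain in CHAINS.items():
    print(f"{platform}: {hops(chain)} hops")
    print("  " + render(chain))
```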

To tell you the truth I’m a bit confused by Windows Hyper-V Networking – and I’m not sure whether it’s my lack of familiarity with it, or overfamiliarity with VMware vSphere that’s the cause. Familiarity with a technology can lead to it becoming almost second nature to you, just because the more you use it the more obvious to you it becomes.

But it strikes me that whereas VMware has a single wizard that guides you through the process of creating a vSwitch, Windows Hyper-V has innumerable wizards to break through – and I was following a well-written book that led the way. What I’m left feeling is that I went through many, many steps to get where I wanted to be. My other concern is that, given all these dependencies, locating and reconfiguring these settings becomes harder – as you hunt for the needles in the haystacks. The other worry I have is how difficult it might be to migrate from the Windows Hyper-V Standard Switch to the Logical Switch. Whereas VMware has a migration tool to port your Standard vSwitch settings and VMs over to the Distributed vSwitch, there doesn’t appear to be an easy migration wizard in SCVMM to handle this possible scenario. But of course, my bigger concern is the number of steps the SysAdmin must walk through for what would seem like relatively trivial tasks.

Perhaps a good illustration is to set a common task. Let’s say a new collection of VMs is being created and the application owner and business have demanded (rightly or wrongly) that these VMs should reside on their own VLAN. They have some mixed-up, crazy notion that somehow by having these VMs on their own dedicated VLAN they will be “more secure”, as in their minds security and VLANs have become conflated. Where would you go to define a new VLAN in Windows Hyper-V? If you have read this blogpost closely it should be obvious… or maybe not.

[I’m waiting…]

The answer? First you need to modify the properties of the logical network, and add in a new VLAN to the Site specifying both its name, the Windows Hyper-V Hosts that will have access to it, and the VLAN ID and subnet range.

Then, optionally you could create a new IP Pool and associate it with the Site/VLAN.

The third and final step would be to define a new “VM Network” to utilize the new Site-VLAN definition.
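Written out against a toy in-memory model, the three steps above look like this. It is a minimal sketch in Python. The dictionary layout and function name are my own invention and have nothing to do with SCVMM’s real object model, and VLAN16, the 172.168.9.0/24 subnet and the VM Network name are hypothetical.

```python
def add_vlan(env, site, vlan_id, subnet, vm_network, pool_range=None):
    """Apply the three steps to an in-memory model; return the steps taken."""
    steps = []

    # Step 1: add the VLAN/subnet pair to the site on the logical network.
    env["sites"].setdefault(site, {})[vlan_id] = subnet
    steps.append(f"added VLAN{vlan_id} ({subnet}) to site {site}")

    # Step 2 (optional): create an IP Pool for the new Site-VLAN.
    if pool_range is not None:
        env["ip_pools"][(site, vlan_id)] = pool_range
        steps.append(f"created IP pool {pool_range[0]}-{pool_range[1]}")

    # Step 3: create a VM Network that uses the new Site-VLAN.
    env["vm_networks"][vm_network] = (site, vlan_id)
    steps.append(f"created VM Network {vm_network}")
    return steps


# Existing environment: one site with VLAN12 already defined (mask assumed).
env = {
    "sites": {"CorpHQ-Network": {12: "172.168.5.0/24"}},
    "ip_pools": {},
    "vm_networks": {},
}

# Hypothetical new VLAN for the application owner, without an IP pool.
steps = add_vlan(env, "CorpHQ-Network", 16, "172.168.9.0/24", "AppTeam-VLAN16")
for s in steps:
    print(s)
```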

Phew!

As a long time “virtualizationist” I’m clearly a big fan of the concept of abstraction. The hypervisor is the primary layer of abstraction – separating the underlying hardware from the virtual machine. But there are other layers of abstraction as well that are meant to make life easier. A classic example is the NIC teaming being done at the hypervisor level, which means you can dispense with having to configure NIC teaming in the guest operating system.

But despite being a big fan of abstraction, I have an underlying anxiety associated with it as well. I worry all those layers of abstraction could just add layers of complexity, obscuring the relationships between the virtual machine and the outside world. The question always has to be asked – do additional layers of abstraction add, subtract or detract from the overall management experience? After all no one wants “absubtraction” or “abdetraction”. But it seems to me that this is the future, especially once true network virtualization takes hold in the form of technologies like VMware NSX. It seems clear to me the simplistic relationships between the VM and physical world are disappearing. Where we’re heading is a world where there will be a lot of flexibility, but with that will come a greater complexity, where we will just have to accept the underlying physical world has been “taken care of” and we focus on our immediate connectivity to the VM.

(“absubtraction” and “abdetraction” are new words by the way. I just made them up. I can be like that sometimes. I’m sorry).

Laying aside these rather obtuse arguments for and against layers of abstraction, my brief experience of Windows Hyper-V networking has suggested one observation. Microsoft puts quite a large emphasis on converged networking. Now as we all know converged networking is generally a wonderful technology, but sadly not one that all customers have access to. Occasionally, I’ve wondered if this emphasis on converged networking is a tacit admission by Microsoft – that without it you would have your work cut out to build out a Windows Hyper-V environment that would have all the availability you require whilst at the same time meeting the traffic separation many of the validation tools require. I’m thinking specifically of Microsoft Failover Clustering. That, my friends, is quite another proposition altogether and one I will be looking at in my next thrilling instalment of my Travels in Hyper-V R2eality.

Addendum: Adding new hosts to a pre-configured environment

It’s common for most enterprise software to take some time to stand up, but once you’re using it on a daily basis the admin cost goes down. It’s called in the trade “Total Cost of Ownership”. A vile and unspeakable term that has become overused in our industry, to the degree it has been hollowed out of all meaning.

In this article I asked the question about setting up a new VLAN. But I’ve more recently been adding more Windows Hyper-V hosts into my SCVMM. They reside in the SAME site, so they would therefore require access to the same/similar networks. Rather than defining a brand new logical network/switch and so on, I decided to “reuse” my existing configuration – which has the lowest penalty in terms of admin. OR at least I thought it would.

1. Add the Windows Hyper-V hosts into SCVMM

2. In +Fabric, +Networking, +Logical Network – on the properties of the Logical Network, add the new hosts into the definition

3. On the properties of the new host, assign the Logical Network to the physical interfaces used to access them – enabling which site/subnets/VLANs that should be accessible.

4. Next assign the Virtual Switch to the new Hyper-V host, select the right physical NICs from those that are available.

5. Repeat and Rinse for every new host in the cluster.
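The “repeat and rinse” is the part that adds up: four manual touches per host, multiplied by every host in the cluster. This is a minimal sketch of the loop in Python against a toy in-memory model. The host names, dictionary layout and the logical network and switch names are hypothetical, and none of this calls SCVMM. It only mirrors the sequence of steps above.

```python
def onboard_host(env, host, vm_nic, switch_nics):
    """Mirror steps 1-4 for one new host; return the number of admin actions."""
    env["managed_hosts"].add(host)                                   # step 1
    env["logical_network_hosts"].add(host)                           # step 2
    env["nic_to_logical_network"][(host, vm_nic)] = env["logical_network"]  # step 3
    env["switch_assignments"][host] = {                              # step 4
        "switch": env["logical_switch"],
        "nics": list(switch_nics),
    }
    return 4


env = {
    "logical_network": "CorpHQ",          # hypothetical names throughout
    "logical_switch": "CorpHQ-LogicalSwitch",
    "managed_hosts": set(),
    "logical_network_hosts": set(),
    "nic_to_logical_network": {},
    "switch_assignments": {},
}

# Step 5: repeat and rinse for every new host in the cluster.
new_hosts = ["hyperv03", "hyperv04"]
actions = sum(onboard_host(env, h, "ethernet3-VM", ["ethernet3-VM"]) for h in new_hosts)
print(f"{actions} admin actions for {len(new_hosts)} hosts")
```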