I am pleased to say I will be playing once again at the Queen’s Head, Belper, on Jan 18th. It’s a split bill (which means […]

My year in music

So this is a post about two things – a round-up of my year in music, both as a player and as a listener – and some […]

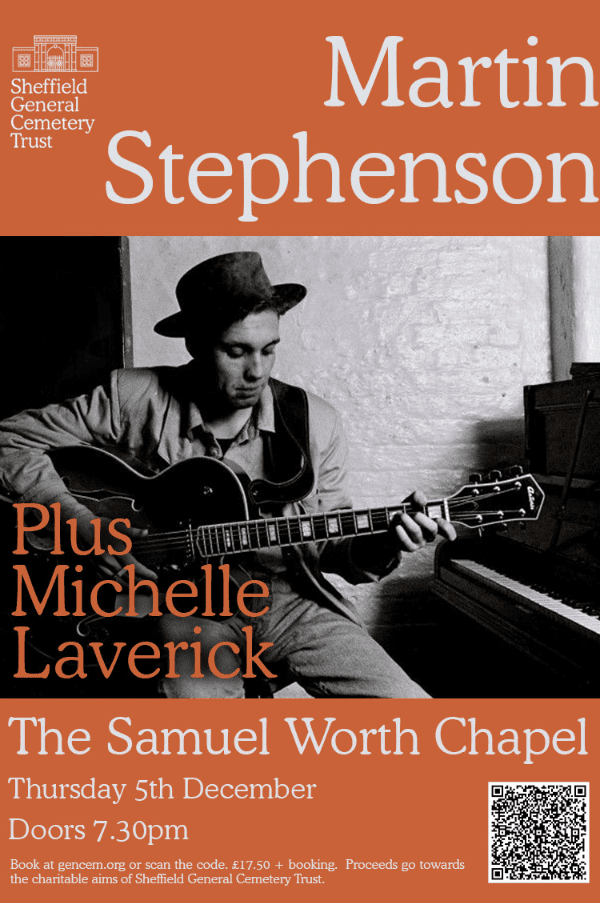

GIG: Supporting Martin Stephenson in Sheffield

So my last gig of the year is supporting Martin Stephenson at the Samuel Worth Chapel in Sheffield. I think tickets are sold out now […]

BBC Introducing: The Butchers Daughter

So pleased to be back on BBC Music Introducing in the East Midlands again with a song from my debut EP “Selkie Child” – […]

GIG: Final Whistle Pub, Southwell: 27th Oct

So, I’ve managed to sneak in another gig amongst the last remaining dates for this year. Malcom Slater, who is closely involved with the Gate […]

A Walk Around Seal Sands, Teesside and the Song Selkie Child

So I recently bought a gimbal for my phone, for taking short videos when I’m out and about. Last weekend I was in Teesside for […]

Live at Band on the Wall & Playing Acoustic Support

Last Sunday, I found myself playing support again for Martin Stephenson and the Daintees (yes, the full band!) at Manchester’s Band on the Wall. I’ve […]

Hartlepool Folk Festival – 2024

After finishing up at the songwriting retreat, I headed south to Hartlepool. As an ex-exiled smoggie from Teesside, I can’t explain how weird the concept of […]

Official KLOF Magazine Review of Selkie Child EP

I’m thrilled to say that the influential KLOF Magazine have reviewed my debut EP. The review was done by Nigel Spencer, formerly of Folk Police […]

May you find…. humankind…

So, the last two weeks have been a bit of a whirlwind for me – so much so that it’s tempting to dump it all […]