So, last Friday, I released my debut EP, and I’m pleased to already be getting early feedback and reviews from the folks who pre-ordered the […]

Folk East Finds

So, a couple of weeks ago, I attended my first-ever folk festival (which sounds like a Fisher-Price toy). It’s also a bit unfair because I […]

Michelle Laverick (EP) and Bex Fawn Johnsone (LP)

My friend Bex (Fawn Music) and I are planning a series of joint gigs in Derby, Wirksworth, and Sheffield over the next few months. […]

The Nick Drake Gathering – 2024

So this weekend I went to my very first Nick Drake Gathering, and I’d have to say I enjoyed myself immensely. It was my first […]

Supporting Martin Stephenson and the Dainties

I’m so pleased to announce that I will be supporting Martin Stephenson (and the Dainties) on his autumn tour. My brother and I are overjoyed […]

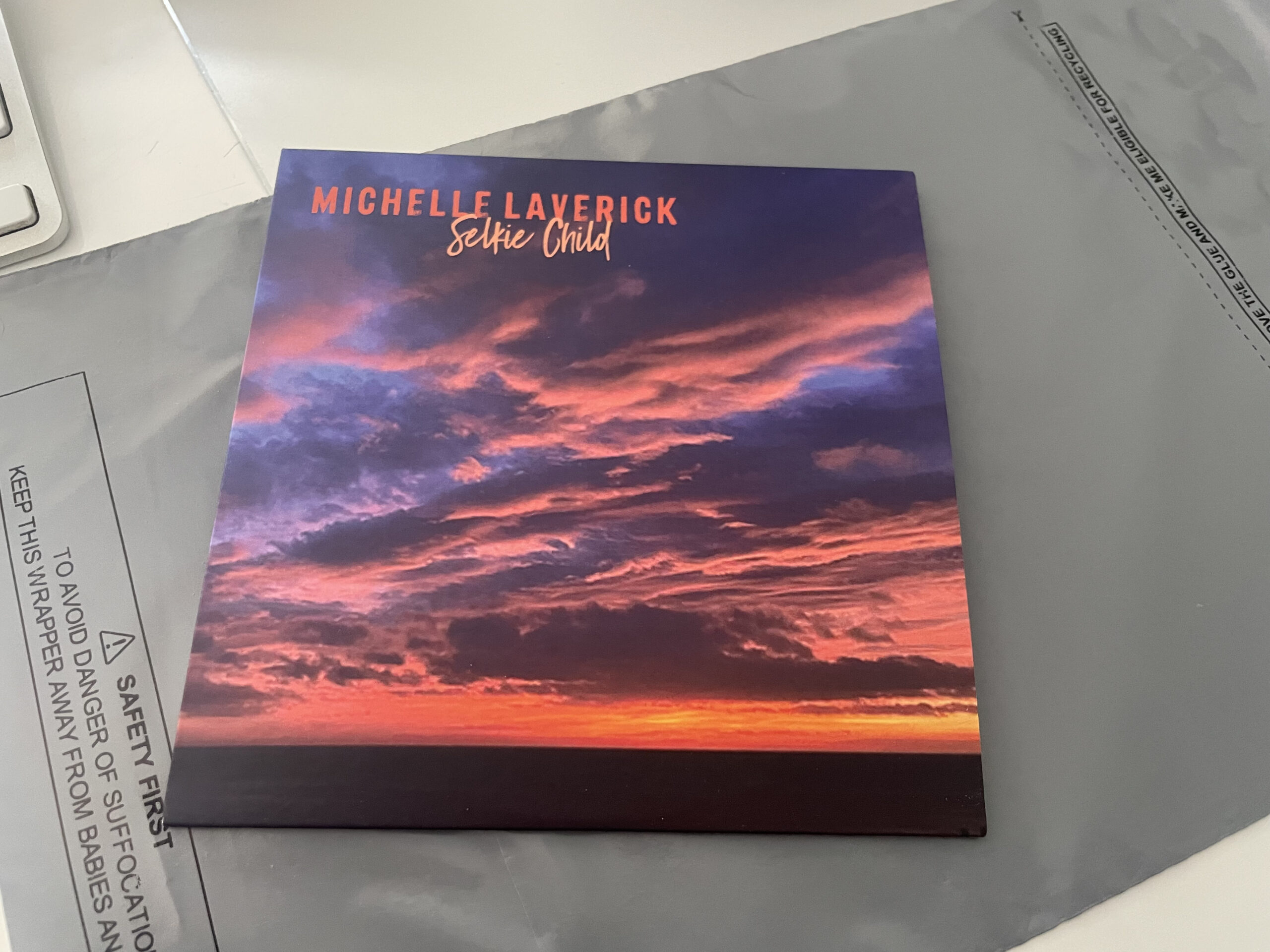

Silkie Child EP

Welcome to the landing page for Silkie Child, the EP. EP Booklet: Michelle Laverick – Selkie Child Digital Booklet EP Release My debut EP is […]

Supporting Soap Box Preacher

I am pleased to say I will be supporting Soap Box Preacher at their gig at the Railway Arms, Belper. Date: 21st July, 2024, […]

Gateway to Southwell Festival

I’m really stoked to say I made the cut to be placed in the open-mic slot at this year’s “Gateway to […]

An Evening with Tom Robinson and Frankie Archer

I’m feeling rather lovely and tired and jaded after my weekend – a certain sign that it was a good one – as I cheerfully […]

Photos from Samuel Worth Chapel Gig

Some friends were kind enough to take some photos of me performing at the Samuel Worth Chapel in Sheffield last month. I put them on […]