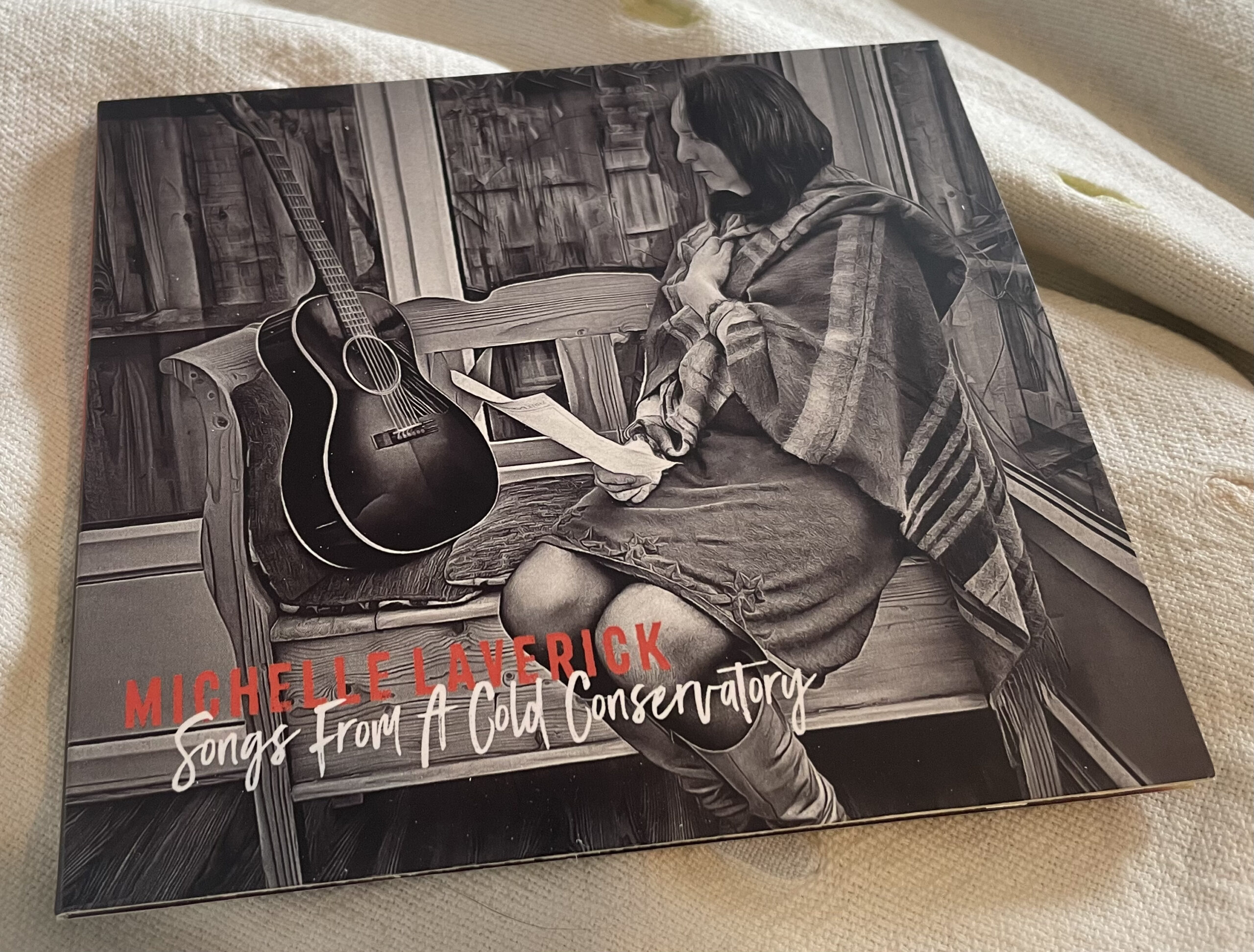

Songs From A Cold Conservatory by Michelle Laverick

I’m delighted to announce that my debut album is available today. I spent last weekend fulfilling the […]

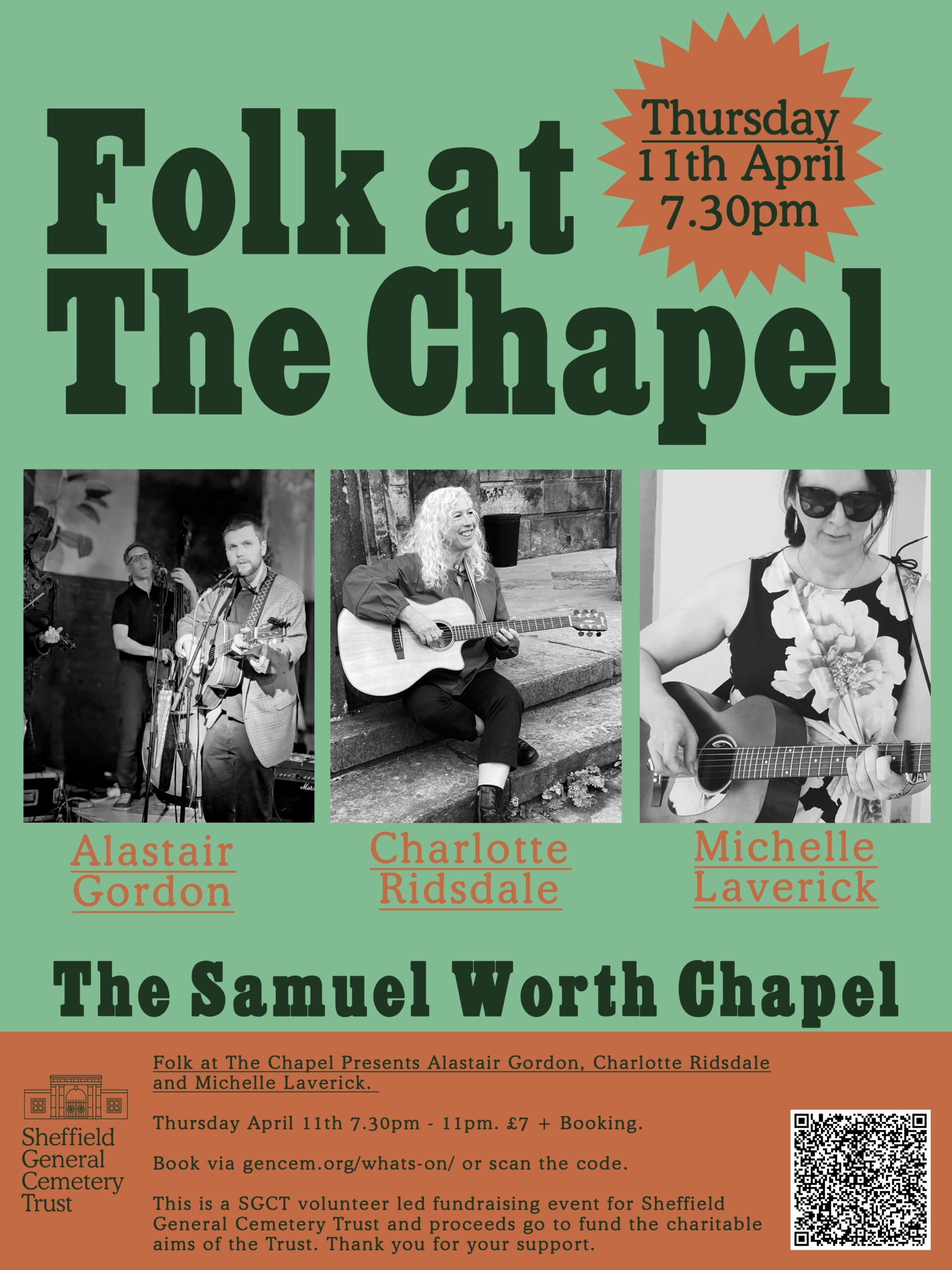

Gig: Sheffield, Samuel Worth Chapel, April 11th

Just wanted to remind folks that I will be playing in Sheffield in a couple of weeks at the rather marvellous venue of Samuel Worth […]

DEMO: No One Cried

So this is a new demo from yours truly. I should say ‘demo’ means it was recorded at home with minimal post-production and not in […]

DEMO: September has gone

Oops. I put this demo out 2 months ago and forgot to blog about it – it’s called “September has gone”. I wanted to do […]

BBC Radio Derby in Session

Last week I was on the radio every evening playing a song and talking about my music. It was a great opportunity – made the […]

DEMO: This song is for you

I wrote this little ditty at a songwriting workshop with Edwina Hayes in Melbourne, Derbyshire. I came with the music in my head, two lines […]

DEMO: Butcher’s Boy

This is a demo of the traditional song ‘Butcher’s Boy’ to which I’ve put a different tune and also changed the words around a bit […]

Derbyshire Sessions “Tour”

In all honesty, I’m probably not a very good “independent musician” as I’m uncomfortable with the concept of “hustling” for gigs and opportunities to play […]

BBC Radio Derby: Evening Show with Martyn Williams

So last night I was on BBC Radio Derby with my song “This time the darkness wins” (rapidly being dubbed “darkness” with my sound guy, […]

Radio Radio Radio…

I recently made my radio debut on BBC Radio Introducing (in the East Midlands) broadcast on BBC Derby, Nottingham and Leicester. It just shows ‘accidental’ […]

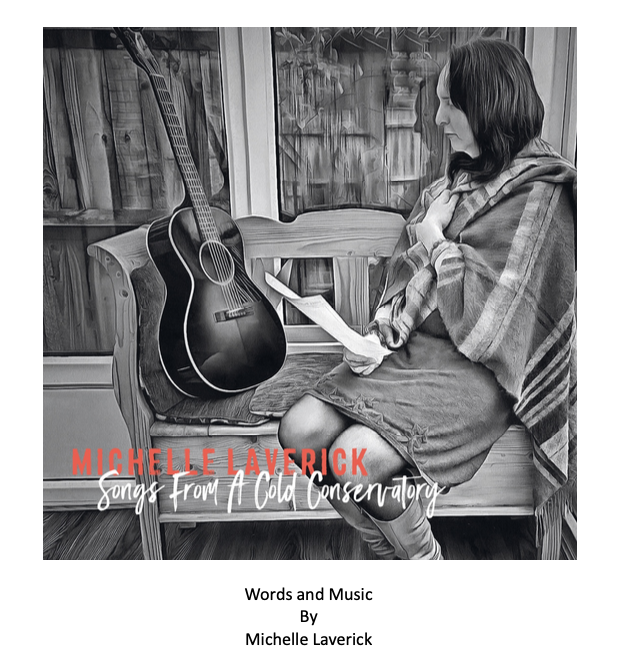

Words & Music: Songs From A Cold Conservatory

So a musical friend expressed an interest in some type of record of my lyrics (and music). I avoided getting a booklet made for the CD […]